gRPC is a modern, open source Remote Procedure Call (RPC) framework that can run in any environment. It enables client and server applications to communicate transparently, and makes it easier to build connected systems. This blog post explores the key features and benefits of gRPC, compares it with REST, and explains how you can use it in your projects.

Before gRPC, Let’s Explain RPC!

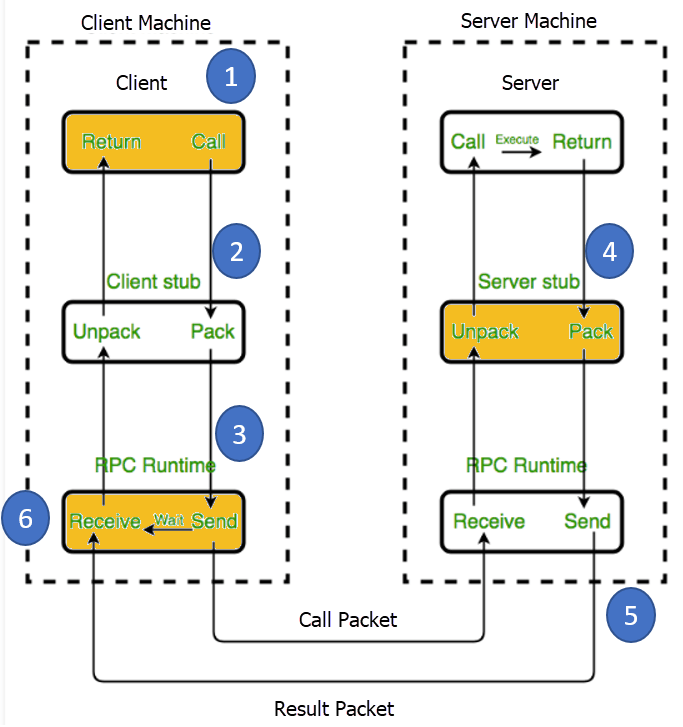

RPC extends conventional local procedure calling so that the called procedure doesn’t need to live in the same host or address space as the calling procedure. The two communicating processes might be on the same system, or they might live on different systems with a network connection in between.

In other words, it’s a form of client-server communication that uses a function call rather than a usual HTTP call. It makes use of an IDL (interface definition language) as a contract on the functions called and the data types returned.

gRPC mirrors this architectural style of client-server communication, also using function calls. So gRPC isn’t the only fish in the sea, but it adopted this technique and improved on it in a way that has made it very popular.

What’s gRPC?

gRPC (pronounced Jee-Arr-Pee-See) doesn’t stand for Google Remote Procedure Call as many people might think. So what does the g actually stand for?

Google changes its meaning in every version they release. The g originally stood for gRPC itself, and then it evolved to good, green, gentle, and more, to the point that they even wrote a README file to list all the meanings.

gRPC is a high-performance, open source RPC framework released by Google in 2015. It’s currently a Cloud Native Computing Foundation project and Google has been using a lot of the underlying technologies and concepts for a long time. Several of Google’s cloud products use the current implementation.

Companies such as Square, Netflix, CoreOS, Docker, CockroachDB, Cisco and Juniper Networks have also been using this technology.

It enables communication between client and server applications using a simple and efficient protocol, making it ideal for building distributed systems and microservices.

gRPC uses Protocol Buffers (aka protobuf) as its default serialisation framework and HTTP/2 as its underlying transport protocol, providing high performance and efficiency.

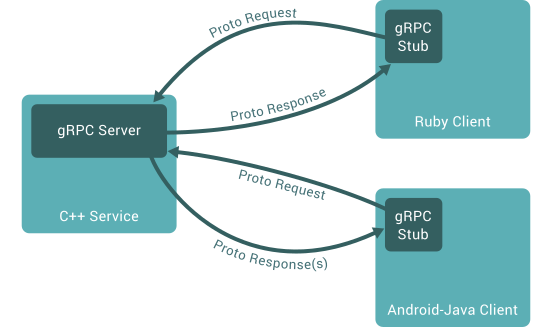

The gRPC architecture entails a client-server model. A client sends the server a request and the server replies back.

The communication between the two is the responsibility of the gRPC application and its corresponding service definitions. These service definitions live in .proto files and contain information on the methods, arguments, and return types.

The client-side code is auto-generated from these definitions and acts as a proxy, making remote calls look and behave like local function calls.

The gRPC framework abstracts away the complexity of the communication. All you need to know is that the client calls the service method and the method runs on the server. The magic that plays out in between is hidden from you.

So why is this so popular? Let’s take a look at some key benefits and features.

Features and Benefits

High Performance Transfers

gRPC makes use of a binary serialization format (Protocol Buffers), which results in faster serialization and parsing than traditional text-based formats like JSON or XML, as well as smaller message sizes. This makes transmission more efficient: it uses fewer resources and less bandwidth.

JSON payload (79 bytes):

```json
{
  "age": 35,
  "first_name": "Renato",
  "last_name": "Cardoso"
}
```

protobuf message definition (the same data encodes to a 19-byte payload):

```protobuf
message Person {
  int32 age = 1;
  string first_name = 2;
  string last_name = 3;
}
```
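To see where the 19 bytes come from, here is a minimal sketch that hand-encodes the Person message using the protobuf wire format (a tag per field, varints for integers, length-prefixed UTF-8 for strings). It is not the protobuf library: the class name `PersonWireSize` and its helper methods are made up for illustration, and unlike a real proto3 serializer it doesn't skip fields that hold default values.

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;

// Illustrative only: a hand-rolled encoder for the Person message above.
public class PersonWireSize {

    // Writes a varint: 7 bits per byte, high bit set on every byte but the last.
    public static void writeVarint(ByteArrayOutputStream out, long value) {
        while ((value & ~0x7FL) != 0) {
            out.write((int) ((value & 0x7F) | 0x80));
            value >>>= 7;
        }
        out.write((int) value);
    }

    // A field tag is (field number << 3) | wire type.
    public static void writeTag(ByteArrayOutputStream out, int fieldNumber, int wireType) {
        writeVarint(out, ((long) fieldNumber << 3) | wireType);
    }

    public static void writeInt32(ByteArrayOutputStream out, int fieldNumber, int value) {
        writeTag(out, fieldNumber, 0); // wire type 0 = varint
        writeVarint(out, value);
    }

    public static void writeString(ByteArrayOutputStream out, int fieldNumber, String value) {
        byte[] utf8 = value.getBytes(StandardCharsets.UTF_8);
        writeTag(out, fieldNumber, 2); // wire type 2 = length-delimited
        writeVarint(out, utf8.length);
        out.write(utf8, 0, utf8.length);
    }

    public static byte[] encodePerson(int age, String firstName, String lastName) {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        writeInt32(out, 1, age);         // 1 tag byte + 1 value byte
        writeString(out, 2, firstName);  // 1 tag byte + 1 length byte + text
        writeString(out, 3, lastName);   // 1 tag byte + 1 length byte + text
        return out.toByteArray();
    }

    public static void main(String[] args) {
        byte[] encoded = encodePerson(35, "Renato", "Cardoso");
        System.out.println(encoded.length + " bytes"); // prints "19 bytes"
    }
}
```

The field names never travel on the wire, only their numbers, which is a large part of why the binary payload is so much smaller than the JSON one.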

Multiplexing

gRPC makes use of the HTTP/2 protocol, which is a big enhancement over HTTP/1.1. HTTP/2 introduces features such as request and response multiplexing over a single connection, header compression and server push. This reduces latency and makes more efficient use of the network, improving overall performance.

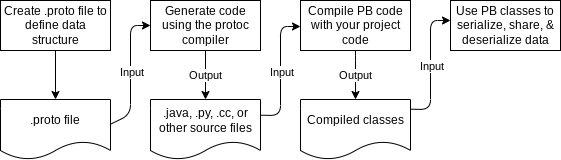

Language-Agnostic & Code Generation

gRPC uses Protocol Buffers to define messages and services, which compile into code in a variety of programming languages including Java, C++ and Python. This makes communication between services simple and flexible regardless of the language used for development. It also lets developers generate code in their preferred language, reducing the amount of boilerplate they need to write.

Cross-Platform

gRPC supports running on multiple platforms, including Linux, Windows, and macOS. This makes it easier to develop and deploy services on a variety of environments.

Secure

gRPC treats security as a first-class concern. It has built-in support for SSL/TLS encryption, both for secure transfers and for mutual authentication.

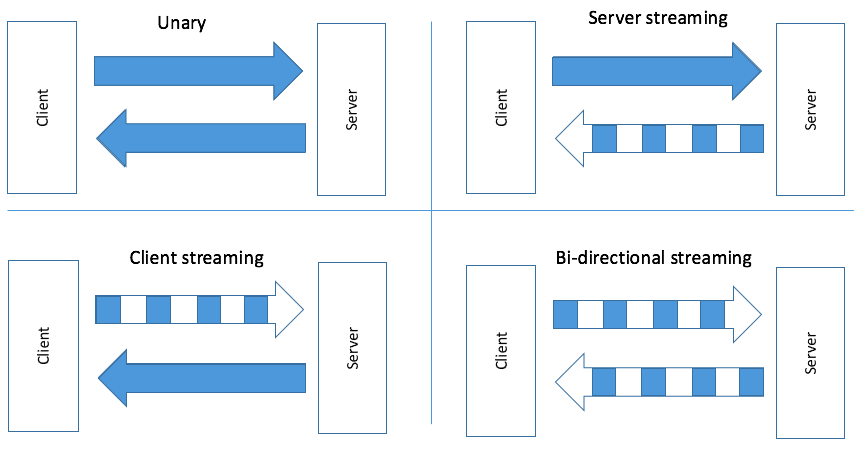

Streaming Support

gRPC provides a number of different types of APIs, including unary, server streaming, client streaming and bidirectional streaming. These APIs allow for a variety of different communication patterns between the client and server.

- Unary RPC: the client sends a single request message to the server and receives a single response. This is the simplest form of RPC.

- Server streaming RPC: the client sends a request message to the server and receives a sequence of responses.

- Client streaming RPC: the client sends a sequence of messages and receives a single response from the server.

- Bidirectional streaming RPC: the client and the server exchange messages in both directions. This one is the most complex, because client and server keep sending and receiving multiple messages in parallel and in arbitrary order. It’s flexible and non-blocking, which means neither side needs to wait for a response before sending its next message.

Strong Community Support

Because it was developed by Google and has gained a lot of traction in the community, gRPC is widely adopted and is continuously improved by developers and organisations.

When To Use gRPC Over REST

gRPC and REST are great for building APIs, and the decision of when to use gRPC over REST really depends on your requirements.

Here are some use cases in which gRPC might be a better choice:

- Performance and efficiency: If you need low-latency communication with high throughput, gRPC is the way to go. It’s designed for this: HTTP/2 multiplexing and binary serialization make it more efficient in terms of data transfer and latency.

- Strongly typed contracts: gRPC relies on Protocol Buffers to define service contracts. This gives you strongly typed APIs with clear data structures and services, enabling better code generation, type safety and easier evolution.

- Code generation: If you want to be fast and decouple the programming language from the protocol, gRPC has your back. It supports automatic code generation for client and server which can save development time and reduce the chances of making errors.

- Bidirectional streaming: gRPC supports bidirectional streaming where both the client and server can send multiple messages in parallel. This is useful for use cases like live data streaming, chats or even notifications.

- Middleware and interceptors: gRPC provides great support for implementing middleware and interceptors allowing you to add functionality like authentication, logging and monitoring in a consistent way.

Nevertheless, REST might be a better choice in other situations:

- Simplicity and wider adoption: REST is simpler to set up and can be an excellent choice for small/internal APIs or simple services. It’s currently widely adopted and understood in the industry.

- Compatibility: REST is well suited for integration with existing systems, as it’s supported by nearly all programming languages and platforms. One of the best examples is its support in all current browsers.

- Readability: REST APIs use human-readable formats like JSON or XML, which can be helpful for debugging and manual testing.

Ultimately, the choice between both depends on your project’s requirements. You could as well use a combination of both as long as it makes sense for your particular scenario. If you prioritize performance, efficiency, and strong typing, gRPC may be the better choice. If simplicity and compatibility with existing systems are more critical, REST may be the way to go.

Why We Use gRPC at Flutter

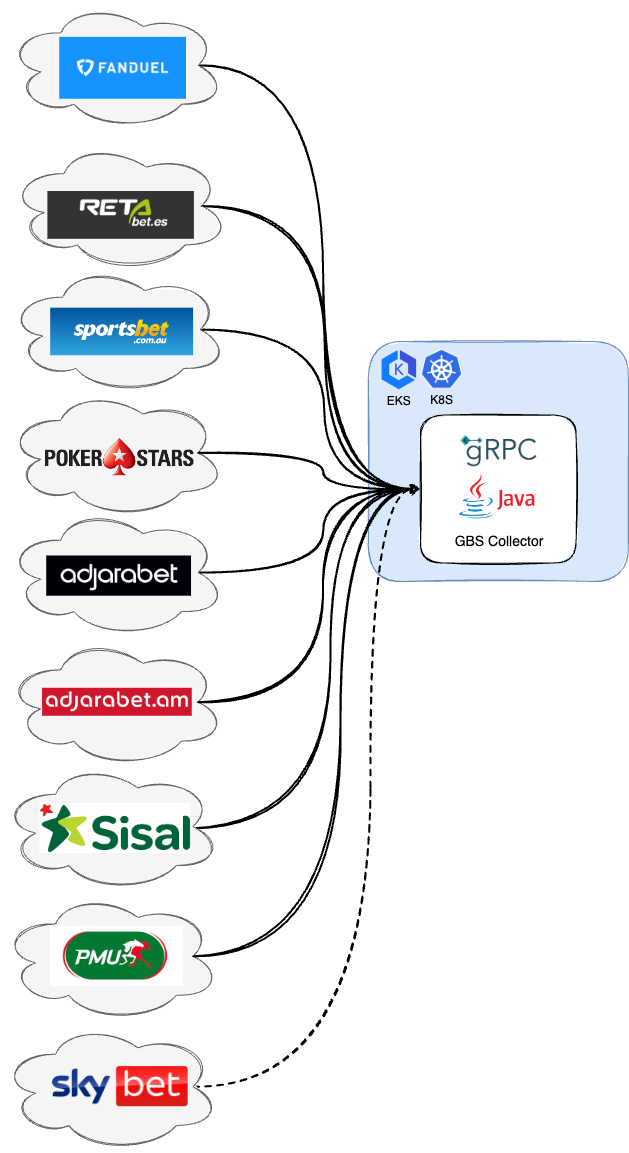

Flutter is a global sports betting, gaming, and entertainment provider. We operate some of the world’s most innovative, diverse, and distinctive brands with over 18 million customers worldwide.

Our customers place billions of bets every year across all brands. Each brand operates individually; however, Flutter needed a centralised place where it could collect every placed bet and process it.

To achieve it, we decided to build an application called GBSC (Global Bet Stream Collector) which has the following architecture. For simplicity let’s look at some brands only.

The requirements were very clear and well defined. Let’s look at the most important ones:

- Ability to ingest a large volume of bets coming from various brands operating in distinct parts of the world (countries/continents).

- Data transmission must have the lowest possible latency, because automated actions may be triggered right after the data is processed.

- Individual access control for each brand.

- The same Bet model used across all brands, in order to deal with data in a single format.

- Possibility of model evolution.

- Each brand can choose where to deploy its integration application, as well as its language and operating system of choice.

- Data encryption in transit.

Looking at these requirements, it’s easy to see that gRPC ticks pretty much all of them. Taking them in the same order, here is how gRPC addresses each one:

- By using unary or bidirectional streaming calls, it can ingest large volumes of bets with the help of persistent connections and asynchronous API calls.

- By using HTTP/2 persistent connections, it performs the TLS handshake just once per connection, avoiding the network round trips that this process would otherwise add to each new connection. Using a binary data model also keeps message sizes smaller.

- By using interceptors, it can easily validate HTTP headers (e.g. an Authorization header carrying a JWT) in order to authorise the call.

- By using protobuf, we can build a library in any gRPC-supported language and distribute it to the brands so that they can import it into their projects. It’s definitely a better approach than distributing the protobuf files themselves.

- By using protobuf, it gets backwards and forwards compatibility. As long as we follow some simple practices when updating .proto definitions, old code reads new messages without issues, ignoring any newly added fields. To the old code, deleted fields have their default value, and deleted repeated fields are empty. We currently make use of proto-backwards-compat-maven-plugin to help us avoid mistakes.

- By using protobuf, it can generate code in any language supported by gRPC. This gives each brand great flexibility to opt for its language of choice and its deployment preference. gRPC is also supported by all the big cloud providers.

- Running HTTP/2 over TLS encrypts data in transit, which helps to mitigate spoofing attacks by robustly encrypting and authenticating transmitted data, preventing interception of traffic and blocking the decryption of sensitive data in the bet payload or authentication tokens.
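The compatibility point above (old code ignoring fields it doesn’t know about) can be sketched with a small hand-written decoder. This is only an illustration of the wire-format idea, not how the generated protobuf code is implemented: `UnknownFieldSkipDemo`, `OldBet` and `newMessageBytes` are hypothetical names, and the scenario assumes an "old" reader that only knows the betId (field 1) and betTime (field 2) of the Bet message, while the sender already includes totalStake (field 3).

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.charset.StandardCharsets;

// Illustrative only: an "old" reader that skips fields added after it was built.
public class UnknownFieldSkipDemo {

    // Hypothetical old view of Bet: it predates the totalStake field.
    public static final class OldBet {
        public String betId = "";
        public long betTime = 0;
    }

    static long readVarint(ByteBuffer in) {
        long result = 0;
        int shift = 0;
        while (true) {
            byte b = in.get();
            result |= (long) (b & 0x7F) << shift;
            if ((b & 0x80) == 0) {
                return result;
            }
            shift += 7;
        }
    }

    // The wire type in every tag tells the reader how many bytes to skip.
    static void skip(ByteBuffer in, int wireType) {
        switch (wireType) {
            case 0: readVarint(in); break;                         // varint
            case 1: in.position(in.position() + 8); break;         // 64-bit
            case 2: {                                              // length-delimited
                int length = (int) readVarint(in);
                in.position(in.position() + length);
                break;
            }
            case 5: in.position(in.position() + 4); break;         // 32-bit
            default: throw new IllegalArgumentException("Unsupported wire type " + wireType);
        }
    }

    public static OldBet decode(byte[] bytes) {
        ByteBuffer in = ByteBuffer.wrap(bytes);
        OldBet bet = new OldBet();
        while (in.hasRemaining()) {
            long tag = readVarint(in);
            int field = (int) (tag >>> 3);
            int wireType = (int) (tag & 0x7);
            if (field == 1 && wireType == 2) {
                byte[] utf8 = new byte[(int) readVarint(in)];
                in.get(utf8);
                bet.betId = new String(utf8, StandardCharsets.UTF_8);
            } else if (field == 2 && wireType == 0) {
                bet.betTime = readVarint(in);
            } else {
                skip(in, wireType); // unknown to this reader: ignore it
            }
        }
        return bet;
    }

    // A message as a newer sender would write it: betId, betTime and totalStake.
    public static byte[] newMessageBytes() {
        ByteBuffer msg = ByteBuffer.allocate(16).order(ByteOrder.LITTLE_ENDIAN);
        msg.put((byte) 0x0A).put((byte) 3).put("b-1".getBytes(StandardCharsets.UTF_8));
        msg.put((byte) 0x10).put((byte) 42);
        msg.put((byte) 0x19).putDouble(10.5); // field 3, 64-bit wire type
        return msg.array();
    }

    public static void main(String[] args) {
        OldBet bet = decode(newMessageBytes());
        System.out.println(bet.betId + " @ " + bet.betTime); // prints "b-1 @ 42"
    }
}
```

Because every field carries its number and wire type, a reader can step over data it doesn’t understand, which is what makes adding fields a safe change.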

Using gRPC

In order to use gRPC in your own project, you need to define your services and messages using a .proto file. This file defines the methods and parameters for your services, as well as the messages exchanged between the client and server.

Once you’ve defined your .proto file, you can use the gRPC tools to generate client and server code in your preferred programming language.

To run your gRPC service, you need to start a gRPC server that listens for incoming requests. You can then start your client and make requests to the server.

Let’s look at a service definition in detail:

bet-service.proto

```protobuf
syntax = "proto3";

package com.flutter.gbs;

import "bet.proto";

service BetService {
  // Unary call to send a Bet message and receive a BetAck message back
  rpc sendBet(Bet) returns (BetAck);

  // Bi-directional stream calls to send multiple Bet messages and receive a stream of BetAck messages back
  rpc sendBets(stream Bet) returns (stream BetAck);
}
```

This code block defines an RPC service, BetService, which declares two methods: sendBet and sendBets (notice the service keyword before the service name and the rpc keyword before each method).

Each method has an input argument as well as a response. Besides the name, the big difference between the two is the keyword stream. This tells you that on the sendBets method you are making use of bidirectional streaming of request and response messages instead of the traditional unary calls.

bet.proto

```protobuf
syntax = "proto3";

package com.flutter.gbs;

message Bet {
  // Unique ID from brand bet platform used to identify the bet placed
  string betId = 1;

  // Timestamp of when the bet was placed
  int64 betTime = 2;

  // Amount staked on the bet
  double totalStake = 3;
}

message BetAck {
  // Status of the response, enum of OK or ERROR
  Status status = 1;

  // Error message if occurred
  string error = 2;

  enum Status {
    OK = 0;
    ERROR = 1;
  }
}
```

Here is the definition of the Bet and BetAck message types. Each property has its type, which can be primitive, or another custom type, such as Status.

After compiling this, the server implementation could be as follows. Here you are just building a successful response without any business logic - this is for demonstration purposes.

BetService.java

```java
package com.flutter.gbs;

import com.flutter.gbs.BetOuterClass.Bet;
import com.flutter.gbs.BetOuterClass.BetAck;
import com.flutter.gbs.BetServiceGrpc;
import io.grpc.stub.StreamObserver;
import lombok.extern.slf4j.Slf4j;

@Slf4j
public class BetService extends BetServiceGrpc.BetServiceImplBase {

    // Unary call: send exactly one BetAck, then complete the call.
    @Override
    public void sendBet(Bet bet, StreamObserver<BetAck> responseObserver) {
        responseObserver.onNext(
                BetAck.newBuilder()
                        .setStatus(BetAck.Status.OK)
                        .build());
        // A unary call must be completed, otherwise the client keeps waiting.
        responseObserver.onCompleted();
    }

    // Bidirectional streaming: return an observer that handles each incoming Bet.
    @Override
    public StreamObserver<Bet> sendBets(StreamObserver<BetAck> responseObserver) {
        return new StreamObserver<Bet>() {

            // Acknowledge every bet as soon as it arrives.
            @Override
            public void onNext(Bet bet) {
                responseObserver.onNext(
                        BetAck.newBuilder()
                                .setStatus(BetAck.Status.OK)
                                .build());
            }

            @Override
            public void onError(Throwable t) {
                log.error("Received error from streaming client", t);
                responseObserver.onError(t);
            }

            // The client has finished sending, so close the response stream too.
            @Override
            public void onCompleted() {
                log.info("Received shutdown from client");
                responseObserver.onCompleted();
            }
        };
    }
}
```

Conclusion

gRPC and protobuf are powerful tools for building efficient and scalable distributed systems. They provide a fast and efficient way to communicate between services, support backward and forward compatibility, and provide a simple and language-agnostic way to define your data structures. If you are building a distributed system, it’s worth considering using gRPC and protobuf to help you achieve your goals.

Regarding using gRPC or REST, both are useful for building distributed systems, but they differ in their approach to communication and data transfer. REST is simpler and more widely supported, making it a good choice for public APIs or web applications. gRPC is more efficient and supports bidirectional streaming, making it a good choice for internal APIs or high-performance applications.

When choosing between gRPC and REST you should take into account the requirements of your project and choose the technology that best meets your needs.

by: Renato Cardoso
